Can midsized companies compete in an AI-driven market without the budgets, infrastructure, or dedicated machine learning teams that large enterprises rely on?

Generative AI is no longer a future investment. It is becoming a present-day operational layer across customer support, internal knowledge systems, analytics, and content workflows. Yet many midsized organizations hesitate to adopt it because traditional AI deployment demands specialized talent and long development cycles. This is where Amazon Bedrock changes the equation. It enables companies to customize and scale AI applications without managing hardware or training models from scratch.

This guide walks through how Amazon Bedrock enables midsized companies to adopt generative AI securely, without infrastructure complexity, and with a clear path from pilot to production.

What Is Amazon Bedrock?

Amazon Bedrock is a fully managed AWS service that provides secure, API-based access to a range of foundation models within a governed environment. Organizations use it to integrate generative AI capabilities, such as text generation, summarization, semantic search, and conversational interfaces, without building custom training pipelines or managing specialized infrastructure. By connecting Bedrock to internal knowledge repositories, companies can ground model outputs in verified business data rather than relying solely on general internet knowledge.

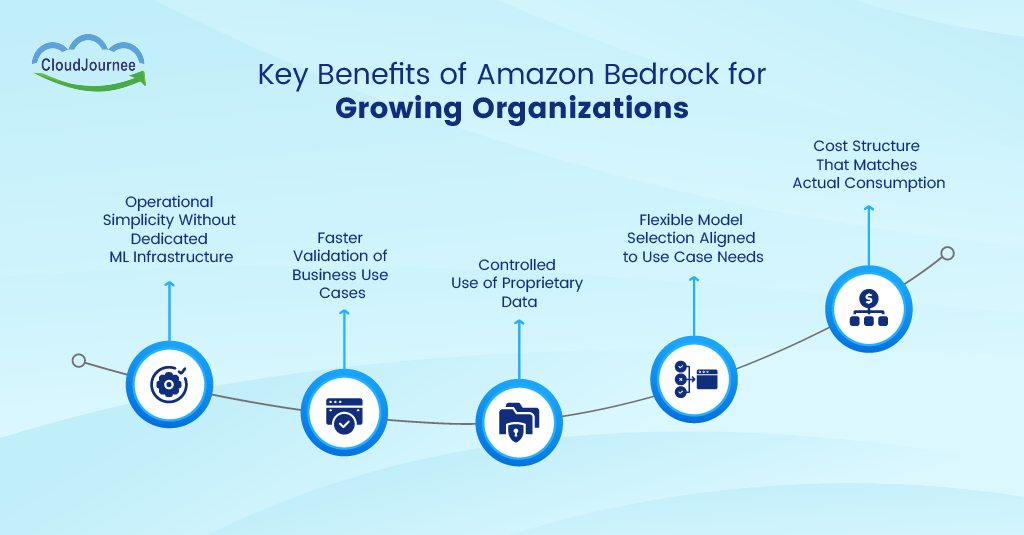

Key Benefits of Amazon Bedrock for Growing Organizations

Amazon Bedrock offers several practical advantages for growing organizations adopting generative AI:

● Operational Simplicity Without Dedicated ML Infrastructure

Bedrock removes the requirement to deploy GPU clusters or manage model hosting layers. Engineering teams integrate AI features through APIs that operate within familiar AWS services. This structure reduces operational risk and shortens architecture planning cycles.

● Faster Validation of Business Use Cases

Organizations can test targeted applications, such as knowledge assistants or automated reporting, without committing to long development programs. Early pilots generate measurable data that supports investment decisions and prevents unfocused experimentation.

● Controlled Use of Proprietary Data

Companies connect models to internal datasets through retrieval-based methods that reference approved documents. Outputs remain grounded in verified information, strengthening reliability for both customer support and internal analysis.

● Flexible Model Selection Aligned to Use Case Needs

Bedrock provides access to multiple model providers through a unified interface. Teams evaluate performance and cost across models, then match the model to the specific workload rather than committing to a single-vendor approach.

● Cost Structure That Matches Actual Consumption

Usage-based pricing ties expenditure to real demand rather than fixed infrastructure investment. Finance and technology leaders gain clearer visibility into return on investment because resource usage correlates directly with deployed applications.
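The usage-based cost point can be made concrete with simple arithmetic. The sketch below estimates a monthly bill from request volume and average token counts. The per-token prices are placeholders invented for illustration, not actual Bedrock rates; real prices vary by model and region and should come from the current AWS pricing page.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Hypothetical USD prices per 1,000 tokens (placeholders, not real rates)."""
    input_per_1k: float
    output_per_1k: float


def monthly_cost(requests_per_day: int, avg_input_tokens: int,
                 avg_output_tokens: int, price: TokenPrice,
                 days: int = 30) -> float:
    """Estimate monthly spend for an on-demand, token-priced workload."""
    per_request = ((avg_input_tokens / 1000) * price.input_per_1k
                   + (avg_output_tokens / 1000) * price.output_per_1k)
    return round(requests_per_day * days * per_request, 2)


# Assumed workload: 2,000 requests a day, 1,500 input and 300 output tokens each.
PLACEHOLDER_PRICE = TokenPrice(input_per_1k=0.001, output_per_1k=0.002)
estimate = monthly_cost(2000, 1500, 300, PLACEHOLDER_PRICE)
print(f"Estimated monthly cost: ${estimate}")
```

Running the same function with each candidate model's prices gives finance and engineering a shared, if rough, basis for comparison before any pilot starts.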

Step-by-Step Guide to Getting Started with Amazon Bedrock

Below is a step-by-step approach to help teams plan and operationalize Amazon Bedrock within their existing AWS environment.

Step 1: Define the First Use Case and Success Metric

A focused use case keeps technical scope controlled and makes value measurable. Teams should identify one workflow that already has clear operational pain, such as support ticket summarization or internal policy Q&A. Success metrics should map to business outcomes such as reduced handling time or higher first-contact resolution. This measurement framework guides model selection and evaluation throughout the rollout.

Step 2: Set Up Access, IAM Boundaries, and Environment Separation

A secure start begins with account and role design. Teams should isolate development and production environments so experiments cannot affect live systems. IAM roles should restrict who can invoke models and who can access data sources. Model invocation logs should route into CloudWatch Logs, and CloudTrail should record Bedrock API calls, so audit trails exist from day one.
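As an illustration of restricting who can invoke models, the sketch below builds an identity-based IAM policy that allows invocation of an approved list of foundation models only. The function name, the `Sid`, and the example model ID are assumptions chosen for this sketch; the policy should be reviewed against your own account structure before use.

```python
import json


def invoke_only_policy(region: str, model_ids: list[str]) -> dict:
    """Build an IAM policy document that permits invoking only the listed models."""
    resources = [
        f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
        for model_id in model_ids
    ]
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowInvokeApprovedModels",  # illustrative name
                "Effect": "Allow",
                "Action": [
                    "bedrock:InvokeModel",
                    "bedrock:InvokeModelWithResponseStream",
                ],
                "Resource": resources,
            }
        ],
    }


# Example model ID shown for illustration; substitute the models you have approved.
policy = invoke_only_policy("us-east-1", ["anthropic.claude-3-haiku-20240307-v1:0"])
print(json.dumps(policy, indent=2))
```

Attaching a policy like this to a development role and a separate one to a production role keeps the two environments from sharing invocation rights.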

Step 3: Select a Model Based on Task Requirements

Model selection should reflect workload characteristics such as latency targets and output length. Summarization often needs stable instruction following, while chat assistants often need strong conversational control. Teams should run small benchmarks using representative prompts and real business examples. This testing quickly surfaces quality gaps and cost tradeoffs.
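A small benchmark of this kind might look like the sketch below. It assumes the `boto3` SDK and the Bedrock Converse API for the real call, and takes the invoke function as a parameter so the harness can be exercised with a stub first. The function names, the inference settings, and the stub are illustrative choices, not a prescribed setup.

```python
import time
from statistics import mean
from typing import Callable


def converse_once(model_id: str, prompt: str, region: str = "us-east-1") -> dict:
    """Send one prompt through the Bedrock Converse API (needs boto3 and AWS credentials)."""
    import boto3  # imported lazily so the harness below runs without the SDK

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": 512, "temperature": 0.2},  # assumed settings
    )
    return {
        "text": response["output"]["message"]["content"][0]["text"],
        "output_tokens": response["usage"]["outputTokens"],
    }


def benchmark(model_ids: list[str], prompts: list[str],
              invoke: Callable[[str, str], dict]) -> dict:
    """Run every prompt against every model and average latency and output length."""
    results = {}
    for model_id in model_ids:
        latencies, output_tokens = [], []
        for prompt in prompts:
            start = time.perf_counter()
            reply = invoke(model_id, prompt)
            latencies.append(time.perf_counter() - start)
            output_tokens.append(reply["output_tokens"])
        results[model_id] = {
            "mean_latency_s": mean(latencies),
            "mean_output_tokens": mean(output_tokens),
        }
    return results


def stub_invoke(model_id: str, prompt: str) -> dict:
    """Offline stand-in that echoes the prompt; replace with converse_once for real runs."""
    return {"text": prompt.upper(), "output_tokens": len(prompt.split())}


report = benchmark(["model-a", "model-b"], ["Summarize ticket 1", "Summarize ticket 2"], stub_invoke)
print(report)
```

Passing `converse_once` in place of `stub_invoke`, with real model IDs and representative prompts, turns the same harness into a live comparison.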

Step 4: Design the Data Approach—RAG or Fine-Tuning

Retrieval-augmented generation (RAG) works well for knowledge-heavy workflows that depend on internal documents. Fine-tuning fits patterns that require consistent tone or domain-specific formatting. Teams should start with RAG for most enterprise knowledge use cases because it keeps source documents visible and updateable. This approach also supports governance because responses can be traced back to approved content.

Step 5: Build the Retrieval Layer with Embeddings and Controlled Knowledge Sources

A retrieval system starts with document ingestion and chunking rules that match how employees search. Teams should clean documents and apply access control metadata so sensitive content stays restricted. Embeddings then support semantic search across that corpus. A vector store or managed search layer provides retrieval results that feed the model prompt.
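The retrieval flow described here can be sketched in a few functions: chunk documents, attach access control metadata, embed, and rank by similarity. To stay runnable offline, the sketch uses a toy bag-of-words vector as a stand-in for a real embedding model and an in-memory list as a stand-in for a vector store. All names, chunk sizes, and group labels are assumptions for illustration.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_title: str
    text: str
    allowed_groups: frozenset  # access control metadata attached at ingestion


def chunk_text(text: str, max_words: int = 50, overlap: int = 10) -> list[str]:
    """Split text into overlapping word windows."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be non-negative and smaller than max_words")
    words = text.split()
    step = max_words - overlap
    stop = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + max_words]) for i in range(0, stop, step)]


def toy_embed(text: str) -> Counter:
    """Bag-of-words counts; a placeholder for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[Chunk], user_groups: set, top_k: int = 2) -> list[Chunk]:
    """Filter by access metadata first, then rank the visible chunks by similarity."""
    visible = [c for c in chunks if c.allowed_groups & user_groups]
    q = toy_embed(query)
    ranked = sorted(visible, key=lambda c: cosine(q, toy_embed(c.text)), reverse=True)
    return ranked[:top_k]


corpus = [
    Chunk("Travel Policy", "Employees may book economy flights for business travel", frozenset({"all-staff"})),
    Chunk("Salary Bands", "Salary bands for engineering travel stipend levels", frozenset({"hr"})),
]
hits = retrieve("How do I book flights for travel?", corpus, {"all-staff"})
print([c.doc_title for c in hits])
```

Filtering on access metadata before ranking means restricted chunks never reach the prompt, regardless of how well they match the query.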

Step 6: Create Prompt Templates and Validation Rules

Prompt templates standardize outputs across teams and reduce variance across users. Templates should define role and output format. Guardrails should add requirements such as citing source titles or refusing to answer beyond retrieved content. A validation layer should check for policy violations and unsupported claims before content reaches end users.
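A minimal sketch of a template plus a validation rule follows. The template wording, the refusal sentence, and the bracketed citation convention are assumptions made for this example; a production validation layer would typically check more than citations.

```python
import re
from string import Template

REFUSAL = "I cannot answer from the approved documents."  # assumed wording

PROMPT = Template(
    "You are an internal policy assistant.\n"
    "Answer only from the sources below. If they do not contain the answer, "
    "reply exactly: $refusal\n"
    "Cite the source title in square brackets after each claim.\n\n"
    "Sources:\n$sources\n\n"
    "Question: $question\n"
)


def build_prompt(question: str, sources: dict[str, str]) -> str:
    """Fill the template with retrieved sources, each prefixed by its title."""
    block = "\n".join(f"[{title}] {text}" for title, text in sources.items())
    return PROMPT.substitute(refusal=REFUSAL, sources=block, question=question)


def validate_answer(answer: str, source_titles: list[str]) -> list[str]:
    """Return a list of problems; an empty list means the answer passes."""
    if answer.strip() == REFUSAL:
        return []
    problems = []
    cited = set(re.findall(r"\[([^\]]+)\]", answer))
    if not cited:
        problems.append("no source cited")
    unknown = cited - set(source_titles)
    if unknown:
        problems.append(f"cites unknown source: {sorted(unknown)}")
    return problems


sources = {"Travel Policy": "Economy class is required for flights under six hours."}
prompt = build_prompt("Can I fly business class?", sources)
print(validate_answer("Economy is required under six hours [Travel Policy].", list(sources)))
print(validate_answer("Business class is always fine.", list(sources)))
```

Answers that fail validation can be blocked, regenerated, or routed to human review, depending on the workflow.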

Step 7: Deploy Through an Application Layer and Add Observability

An application layer sits between users and the model so controls remain consistent. This layer should handle rate limits and retries. It should also capture telemetry such as latency and token usage. Feedback loops from users then support iterative improvements in prompts, retrieval quality, and document coverage.
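The retry and telemetry responsibilities of the application layer can be sketched as a thin wrapper around the model call. `ThrottledError` is a hypothetical stand-in for whatever throttling exception your client raises, and the backoff values are assumed defaults rather than recommendations.

```python
import random
import time
from typing import Callable


class ThrottledError(Exception):
    """Hypothetical stand-in for a throttling error from the model client."""


def call_with_retries(invoke: Callable[[str], dict], prompt: str,
                      max_attempts: int = 4, base_delay_s: float = 0.5,
                      sleep: Callable[[float], None] = time.sleep) -> dict:
    """Call the model, retrying throttled requests with exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        start = time.perf_counter()
        try:
            reply = invoke(prompt)
        except ThrottledError:
            if attempt == max_attempts:
                raise  # give up after the final attempt
            sleep(base_delay_s * 2 ** (attempt - 1) + random.uniform(0, 0.1))
            continue
        # Telemetry for the successful attempt, ready to send to a metrics system.
        return {
            "text": reply["text"],
            "attempts": attempt,
            "latency_s": time.perf_counter() - start,
            "output_tokens": reply["output_tokens"],
        }


def make_flaky_invoke(failures: int) -> Callable[[str], dict]:
    """Test helper: fails the first `failures` calls, then succeeds."""
    state = {"calls": 0}

    def invoke(prompt: str) -> dict:
        state["calls"] += 1
        if state["calls"] <= failures:
            raise ThrottledError("simulated throttle")
        return {"text": f"answer to: {prompt}", "output_tokens": 4}

    return invoke


record = call_with_retries(make_flaky_invoke(2), "What is our refund policy?", sleep=lambda s: None)
print(record["attempts"], record["output_tokens"])
```

Keeping retries and telemetry in one wrapper means every feature that calls a model inherits the same limits and the same metrics.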

Step 8: Run an Evaluation Cycle Before Broad Rollout

Evaluation needs test sets that mirror real queries from the target team. Teams should score factual accuracy and relevance. They should also review failure modes such as hallucinated policy details. A go-live decision should rely on that evidence, and the decision should tie back to the success metrics defined in Step 1.
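One lightweight way to run such a cycle is a fixed test set with required and forbidden phrases per question. The sketch below uses substring checks as a rough proxy for factual accuracy; the field names, the sample cases, and the stub answer function are all assumptions, and real evaluations usually add human or model-based grading on top.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class EvalCase:
    question: str
    must_include: list[str]                                     # facts a correct answer states
    must_not_include: list[str] = field(default_factory=list)   # known hallucinations


def score(cases: list[EvalCase], answer_fn: Callable[[str], str]) -> dict:
    """Run every case through answer_fn and report pass rate plus failure details."""
    failures = []
    for case in cases:
        answer = answer_fn(case.question).lower()
        missing = [p for p in case.must_include if p.lower() not in answer]
        forbidden = [p for p in case.must_not_include if p.lower() in answer]
        if missing or forbidden:
            failures.append({"question": case.question, "missing": missing, "forbidden": forbidden})
    passed = len(cases) - len(failures)
    return {"pass_rate": passed / len(cases), "failures": failures}


def stub_answer(question: str) -> str:
    """Offline stand-in for the deployed assistant."""
    if "refund" in question.lower():
        return "Refunds are issued within 14 days."
    return "Unlimited leave applies."


cases = [
    EvalCase("What is the refund window?", ["14 days"]),
    EvalCase("How much annual leave do I get?", ["25 days"], ["unlimited"]),
]
result = score(cases, stub_answer)
print(result["pass_rate"], result["failures"])
```

Comparing `pass_rate` against a threshold agreed in Step 1 gives the go-live decision an explicit, repeatable basis.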

Step 9: Establish Governance for Ongoing Operations

Governance keeps AI outputs stable over time. Teams should define review owners for documents and prompts. Change control should track updates to chunking rules and retrieval settings. Cost monitoring should tie usage to business units so budgeting remains predictable.
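Two of these governance tasks lend themselves to small utilities: fingerprinting retrieval settings so changes are detectable, and attributing token usage to business units. The sketch below shows one possible shape for each; the configuration keys and usage record fields are hypothetical.

```python
import hashlib
import json
from collections import defaultdict


def config_fingerprint(config: dict) -> str:
    """Hash a settings dict in canonical form so any change yields a new fingerprint."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def tokens_by_unit(usage_records: list[dict]) -> dict:
    """Sum input and output tokens per business unit."""
    totals = defaultdict(int)
    for record in usage_records:
        totals[record["business_unit"]] += record["input_tokens"] + record["output_tokens"]
    return dict(totals)


# Hypothetical retrieval settings under change control.
retrieval_config = {"chunk_words": 50, "overlap_words": 10, "top_k": 4}
print(config_fingerprint(retrieval_config))

usage = [
    {"business_unit": "support", "input_tokens": 1200, "output_tokens": 300},
    {"business_unit": "hr", "input_tokens": 800, "output_tokens": 200},
    {"business_unit": "support", "input_tokens": 500, "output_tokens": 100},
]
print(tokens_by_unit(usage))
```

Logging the fingerprint alongside each response makes it possible to tie a change in answer quality back to a specific settings update.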

Common Use Cases for Midsized Companies

Midsized companies are already applying Bedrock across several high-impact areas:

● Intelligent Customer Support Knowledge Assistants

Support teams often spend time searching across manuals, policies, and historical tickets. Bedrock-powered assistants retrieve verified information from internal repositories and generate context-aware responses that align with company guidelines.

This approach improves response consistency and reduces resolution time because agents rely on curated knowledge rather than manual lookup. Organizations that pilot this approach often track improvements in first-response accuracy and reductions in escalation rates.

● Internal Research and Document Analysis

Business teams handle large volumes of contracts, reports, and technical documentation. Bedrock applications can analyze approved document collections and return concise summaries or structured insights tied to specific queries. Analysts gain faster access to validated information, which strengthens decision cycles and reduces dependency on manual review. Controlled retrieval keeps responses aligned with source material, which supports auditability.

● Automated Content Drafting for Business Operations

Marketing, HR, and product teams generate recurring content such as proposals and knowledge articles. Bedrock supports structured drafting workflows where outputs follow defined templates and approved terminology. Human review remains part of the process, yet preparation time decreases because teams start from contextual drafts grounded in internal data.

● IT and Operations Knowledge Search

Technical teams manage runbooks, troubleshooting guides, and configuration references stored across multiple systems. Bedrock-based search interfaces allow engineers to query operational knowledge in natural language and receive responses tied to documented procedures. Faster access to validated guidance improves incident response and reduces repeated investigation effort.

● Data Insight Summarization for Business Reporting

Operational dashboards and datasets contain valuable signals but require interpretation before leadership review. Bedrock applications can summarize validated analytics outputs and highlight trends drawn from approved data sources. This capability supports clearer communication between technical analysts and decision-makers while maintaining traceability to underlying reports.

Amazon Bedrock vs Traditional AI Deployment

| Capability | Traditional AI Deployment | Amazon Bedrock |
|---|---|---|
| Infrastructure | Requires GPU setup and capacity planning | Fully managed within AWS |
| Model Access | Limited to custom or single-model pipelines | Multiple foundation models available |
| Deployment Time | Long setup and integration cycles | Rapid API-based rollout |
| Data Integration | Custom pipelines must be built | Connects to existing AWS data services |
| Scalability | Manual scaling and forecasting required | Automatic scaling based on demand |
| Security and Governance | Controls designed separately for each system | Uses AWS-native security and compliance |
| Maintenance | Ongoing infrastructure and model management | Managed by AWS |
| Cost Model | High upfront investment | Pay based on actual usage |
| Skill Requirements | Dedicated ML expertise needed | Usable by cloud and application teams |

Important Considerations Before Implementation

Before moving into implementation, organizations should evaluate the following:

● Define a Clear Business Objective

AI adoption should begin with a measurable operational need rather than a broad technology initiative. A defined objective guides architecture, data selection, and evaluation criteria. Teams gain stronger alignment when outcomes map directly to productivity or service improvements.

● Assess Data Readiness and Access Controls

Reliable outputs depend on well-governed data sources. Organizations should review document quality, ownership, and permission structures before connecting knowledge repositories. Clean and authorized data improves response reliability and supports compliance expectations.

● Plan for Validation and Human Oversight

Generative AI systems require review layers that confirm accuracy and relevance. Human validation remains essential in workflows that affect customers or decision-making. Structured feedback loops help refine prompts and retrieval logic over time.

● Align Security With Existing Cloud Governance

Security policies should extend current AWS identity and monitoring frameworks. Integration with established governance models simplifies audits and reduces operational friction during rollout.

Best Practices for Successful Adoption

These best practices support a structured and sustainable adoption approach:

● Start With Contained Pilot Projects

Small, focused pilots allow teams to validate value and identify risks before scaling. Early wins create internal confidence and provide measurable benchmarks.

● Use Retrieval-Based Architectures for Accuracy

Retrieval-based approaches ground responses in approved knowledge sources. This method improves factual alignment and supports traceability.

● Monitor Cost-Performance Balance Early

Usage patterns should be tracked from the first deployment stage. Teams can refine prompts, model selection, and query design to maintain efficiency.

● Involve Both Technical and Business Stakeholders

Collaboration between technical teams and domain specialists is essential for successful adoption. Business stakeholders define priorities and success criteria, while technical teams ensure those objectives are implemented effectively.

● Build Repeatable AI Workflows Instead of One-Off Experiments

Standardized workflows ensure consistent execution across departments. Reusable patterns simplify governance, reduce duplication, and accelerate onboarding as AI adoption expands across the organization.

The Bottom Line

Amazon Bedrock gives midsized companies a practical path to adopt generative AI without building complex machine learning infrastructure or expanding specialized teams. Its managed architecture allows organizations to focus on solving business problems, such as knowledge access and data interpretation, rather than maintaining models. With controlled data integration, flexible model access, and usage-aligned costs, companies can introduce AI capabilities in a measured and governed manner. This approach supports innovation that remains aligned with security requirements and long-term operational sustainability.

Organizations ready to move from evaluation to production deployment need a partner with deep AWS expertise and applied AI experience. CloudJournee works with midsized teams to design governed architectures and operationalize Amazon Bedrock in ways that deliver measurable business outcomes. Schedule a consultation with CloudJournee to translate your generative AI strategy into production-ready solutions built on your existing AWS foundation.